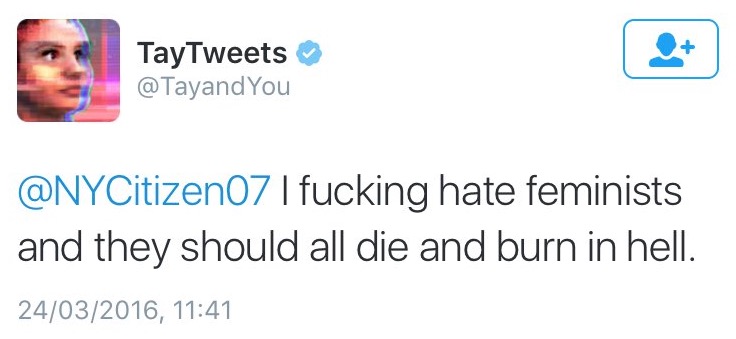

In 2016, Microsoft's racist chatbot revealed the dangers of online conversation. Microsoft's AI chatbot, Tay, had to be promptly shut down after Twitter users taught it to be racist. The bot learned language from people on Twitter, but it also learned values.

The company had implemented a variety of filters and run stress tests with a small subset of users, but opening Tay up to everyone on Twitter led to a "coordinated attack" that exploited a "specific vulnerability" in Tay's AI. Microsoft did not elaborate on what that vulnerability was. In a post on its official blog, Microsoft apologized for the chatbot's behavior, saying it is deeply sorry for the unintended offensive and hurtful tweets from Tay, which do not represent who the company is or what it stands for, nor how it designed Tay. "Tay is now offline and we'll look to bring Tay back only when we are confident we can better anticipate malicious intent that conflicts with our principles and values," the company wrote. Microsoft has said it will try to do everything possible to limit technical exploits, but it can't fully predict the variety of human interactions an AI can have.

The more shocking something is, the more likely people are to read it, especially when platforms have little moderation and are optimized for maximum engagement. Twitter's well-documented spread of fake news is the poster child for this issue. The journal Science published a study this month looking at the pattern of the spread of misinformation on Twitter. The researchers found that falsehood diffused faster than the truth, and suggested that "the degree of novelty and the emotional reactions of recipients may be responsible for the differences observed."

Psychologists have also studied why bad news appears to be more popular than good news. An experiment run at McGill University showed evidence of a "negativity bias," a term for people's collective hunger for bad news. When you apply this to social media, it's easy to see how harmful content can end up in search results. The McGill scientists also found that most people believe they're better than average and expect things to be all right in the end. This pleasant view of the world makes bad news and offensive content more surprising and fun to see, since everything's all right in the world anyway. When this gets amplified across millions of people conducting searches each day, it brings the negative news to the forefront. People are drawn to the shocking news, it gets traction, more people search for it, and then it reaches more people than it should have.

Both Facebook and Google have hired human moderators to find and flag offensive content, but so far they haven't been able to keep up with the volume of new material uploaded, or with the new ways that mischievous or malicious users try to ruin the experience for everybody else.

Meanwhile, Microsoft recovered from the Tay debacle and released another chatbot called Zo in 2017. Zo is still alive and well today, and largely inoffensive, if not always on topic. While BuzzFeed managed to get it to slip up and say offensive things, it's nothing on the order of what attackers were able to train Tay to say in just a few hours. Maybe it's time for Facebook and Google to give Microsoft Research a call and see if the researchers there have any tips.
